Making data analytics work for you—instead of the other way around

Does your data have a purpose? If not, you’re spinning your wheels. Here’s how to discover one and then translate it into action.

Source: McKinsey

By Helen Mayhew, Tamim Saleh, and Simon Williams

Article link: https://www.mckinsey.com/capabilities/mckinsey-digital/our-insights/making-data-analytics-work-for-you-instead-of-the-other-way-around

The data-analytics revolution now under way has the potential to transform how companies organize, operate, manage talent, and create value. That’s starting to happen in a few companies—typically ones that are reaping major rewards from their data—but it’s far from the norm. There’s a simple reason: CEOs and other top executives, the only people who can drive the broader business changes needed to fully exploit advanced analytics, tend to avoid getting dragged into the esoteric “weeds.” On one level, this is understandable. The complexity of the methodologies, the increasing importance of machine learning, and the sheer scale of the data sets make it tempting for senior leaders to “leave it to the experts.”

But that’s also a mistake. Advanced data analytics is a quintessential business matter. That means the CEO and other top executives must be able to clearly articulate its purpose and then translate it into action—not just in an analytics department, but throughout the organization where the insights will be used.

This article describes eight critical elements contributing to clarity of purpose and an ability to act. We’re convinced that leaders with strong intuition about both don’t just become better equipped to “kick the tires” on their analytics efforts. They can also more capably address many of the critical and complementary top-management challenges facing them: the need to ground even the highest analytical aspirations in traditional business principles, the importance of deploying a range of tools and employing the right personnel, and the necessity of applying hard metrics and asking hard questions. (For more on these, see “Straight talk about big data.”) All that, in turn, boosts the odds of improving corporate performance through analytics.

After all, performance—not pristine data sets, interesting patterns, or killer algorithms—is ultimately the point. Advanced data analytics is a means to an end. It’s a discriminating tool to identify, and then implement, a value-driving answer. And you’re much likelier to land on a meaningful one if you’re clear on the purpose of your data (which we address in this article’s first four principles) and the uses you’ll be putting your data to (our focus in the next four). That answer will of course look different in different companies, industries, and geographies, whose relative sophistication with advanced data analytics is all over the map. Whatever your starting point, though, the insights unleashed by analytics should be at the core of your organization’s approach to define and improve performance continually as competitive dynamics evolve. Otherwise, you’re not making advanced analytics work for you.

‘Purpose-driven’ data

“Better performance” will mean different things to different companies. And it will mean that different types of data should be isolated, aggregated, and analyzed depending upon the specific use case. Sometimes, data points are hard to find, and, certainly, not all data points are equal. But it’s the data points that help meet your specific purpose that have the most value.

Ask the right questions

The precise question your organization should ask depends on your best-informed priorities. Clarity is essential. Examples of good questions include “how can we reduce costs?” or “how can we increase revenues?” Even better are questions that drill further down: “How can we improve the productivity of each member of our team?” “How can we improve the quality of outcomes for patients?” “How can we radically speed our time to market for product development?” Think about how you can align important functions and domains with your most important use cases. Iterate through to actual business examples, and probe to where the value lies. In the real world of hard constraints on funds and time, analytic exercises rarely pay off for vaguer questions such as “what patterns do the data points show?”

One large financial company erred by embarking on just that sort of open-ended exercise: it sought to collect as much data as possible and then see what turned up. When findings emerged that were marginally interesting but monetarily insignificant, the team refocused. With strong C-suite support, it first defined a clear purpose statement aimed at reducing time in product development and then assigned a specific unit of measure to that purpose, focused on the rate of customer adoption. A sharper focus helped the company introduce successful products for two market segments. Similarly, another organization we know plunged into data analytics by first creating a “data lake.” It spent an inordinate amount of time (years, in fact) to make the data pristine but invested hardly any thought in determining what the use cases should be. Management has since begun to clarify its most pressing issues. But the world is rarely patient.

Had these organizations put the question horse before the data-collection cart, they surely would have achieved an impact sooner, even if only portions of the data were ready to be mined. For example, a prominent automotive company focused immediately on the foundational question of how to improve its profits. It then bore down to recognize that the greatest opportunity would be to decrease the development time (and with it the costs) incurred in aligning its design and engineering functions. Once the company had identified that key focus point, it proceeded to unlock deep insights from ten years of R&D history—which resulted in remarkably improved development times and, in turn, higher profits.

Think really small . . . and very big

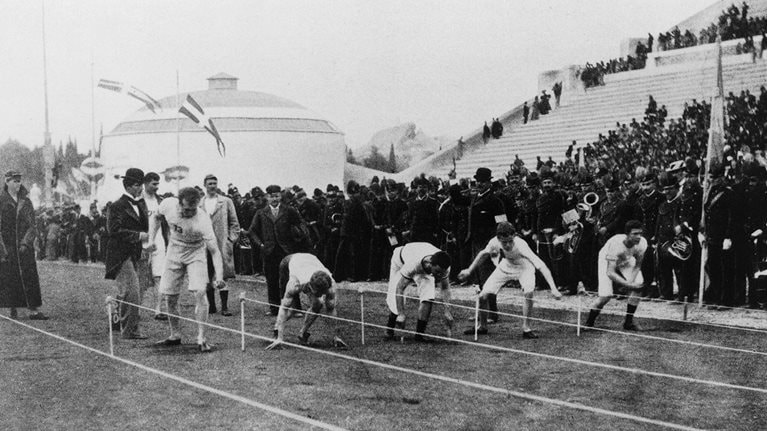

The smallest edge can make the biggest difference. Consider the remarkable photograph below from the 1896 Olympics, taken at the starting line of the 100-meter dash. Only one of the runners, Thomas Burke, crouched in the now-standard four-point stance. The race began in the next moment, and 12 seconds later Burke took the gold; the time saved by his stance helped him do it. Today, sprinters start in this way as a matter of course—a good analogy for the business world, where rivals adopt best practices rapidly and competitive advantages are difficult to sustain.

The variety of stances among runners in the 100-meter sprint at the first modern Olympic Games, held in Athens in 1896, is surprising to the modern viewer. Thomas Burke (second from left) is the only runner in the crouched stance—considered best practice today—an advantage that helped him win one of his two gold medals at the Games.

The good news is that intelligent players can still improve their performance and spurt back into the lead. Easy fixes are unlikely, but companies can identify small points of difference to amplify and exploit. The impact of “big data” analytics is often manifested by thousands—or more—of incrementally small improvements. If an organization can atomize a single process into its smallest parts and implement advances where possible, the payoffs can be profound. And if an organization can systematically combine small improvements across bigger, multiple processes, the payoff can be exponential.

Just about everything businesses do can be broken down into component parts. GE embeds sensors in its aircraft engines to track each part of their performance in real time, allowing for quicker adjustments and greatly reducing maintenance downtime. But if that sounds like the frontier of high tech (and it is), consider consumer packaged goods. We know a leading CPG company that sought to increase margins on one of its well-known breakfast brands. It deconstructed the entire manufacturing process into sequential increments and then, with advanced analytics, scrutinized each of them to see where it could unlock value. In this case, the answer was found in the oven: adjusting the baking temperature by a tiny fraction not only made the product taste better but also made production less expensive. The proof was in the eating—and in an improved P&L.

When a series of processes can be decoupled, analyzed, and resynched together in a system that is more universe than atom, the results can be even more powerful. A large steel manufacturer used various analytics techniques to study critical stages of its business model, including demand planning and forecasting, procurement, and inventory management. In each process, it isolated critical value drivers and scaled back or eliminated previously undiscovered inefficiencies, for savings of about 5 to 10 percent. Those gains, which rested on hundreds of small improvements made possible by data analytics, proliferated when the manufacturer was able to tie its processes together and transmit information across each stage in near real time. By rationalizing an end-to-end system linking demand planning all the way through inventory management, the manufacturer realized savings approaching 50 percent—hundreds of millions of dollars in all.

Embrace taboos

Beware the phrase “garbage in, garbage out”; the mantra has become so embedded in business thinking that it sometimes prevents insights from coming to light. In reality, useful data points come in different shapes and sizes—and are often latent within the organization, in the form of free-text maintenance reports or PowerPoint presentations, among multiple examples. Too frequently, however, quantitative teams disregard inputs because the quality is poor, inconsistent, or dated and dismiss imperfect information because it doesn’t feel like “data.”

But we can achieve sharper conclusions if we make use of fuzzier stuff. In day-to-day life—when one is not creating, reading, or responding to an Excel model—even the most hard-core “quant” processes a great deal of qualitative information, much of it soft and seemingly taboo for data analytics—in a nonbinary way. We understand that there are very few sure things; we weigh probabilities, contemplate upsides, and take subtle hints into account. Think about approaching a supermarket queue, for example. Do you always go to register four? Or do you notice that, today, one worker seems more efficient, one customer seems to be holding cash instead of a credit card, one cashier does not have an assistant to help with bagging, and one shopping cart has items that will need to be weighed and wrapped separately? All this is soft “intel,” to be sure, and some of the data points are stronger than others. But you’d probably consider each of them and more when you decided where to wheel your cart. Just because line four moved fastest the last few times doesn’t mean it will move fastest today.

In fact, while hard and historical data points are valuable, they have their limits. One company we know experienced them after instituting a robust investment-approval process. Understandably mindful of squandering capital resources, management insisted that it would finance no new products without waiting for historical, provable information to support a projected ROI. Unfortunately, this rigor resulted in overly long launch periods—so long that the company kept mistiming the market. It was only after relaxing the data constraints to include softer inputs such as industry forecasts, predictions from product experts, and social-media commentary that the company was able to get a more accurate feel for current market conditions and time its product launches accordingly.

Of course, Twitter feeds are not the same as telematics. But just because information may be incomplete, based on conjecture, or notably biased does not mean that it should be treated as “garbage.” Soft information does have value. Sometimes, it may even be essential, especially when people try to “connect the dots” between more exact inputs or make a best guess for the emerging future.

To optimize available information in an intelligent, nuanced way, companies should strive to build a strong data provenance model that identifies the source of every input and scores its reliability, which may improve or degrade over time. Recording the quality of data—and the methodologies used to determine it—is not only a matter of transparency but also a form of risk management. All companies compete under uncertainty, and sometimes the data underlying a key decision may be less certain than one would like. A well-constructed provenance model can stress-test the confidence for a go/no-go decision and help management decide when to invest in improving a critical data set.
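A provenance model of this kind can be sketched in a few lines of Python. This is only an illustration, not a method used by any company mentioned here: the `DataInput` fields, the 0-to-1 reliability scale, the linear monthly decay, the weights, and all sample values are assumptions made for the example.

```python
from dataclasses import dataclass
from datetime import date


@dataclass
class DataInput:
    """One input to a decision, with its provenance (all fields hypothetical)."""
    name: str
    source: str                 # where the input came from
    reliability: float          # 0.0 (conjecture) to 1.0 (audited), as scored on assessed_on
    assessed_on: date           # when the reliability score was assigned
    monthly_decay: float = 0.0  # assumed loss of reliability per month of age

    def current_reliability(self, today: date) -> float:
        # Whole months elapsed since the score was assigned.
        months = (today.year - self.assessed_on.year) * 12 + (
            today.month - self.assessed_on.month
        )
        return max(0.0, self.reliability - self.monthly_decay * max(0, months))


def decision_confidence(inputs, weights, today):
    """Weighted average of current reliability across the inputs behind a decision."""
    total_weight = sum(weights[i.name] for i in inputs)
    weighted = sum(weights[i.name] * i.current_reliability(today) for i in inputs)
    return weighted / total_weight


def weakest_input(inputs, today):
    """The input whose reliability is currently lowest: a candidate for investment."""
    return min(inputs, key=lambda i: i.current_reliability(today))


# Illustrative inputs mixing hard and soft data.
inputs = [
    DataInput("sales_history", "internal ERP", 0.9, date(2024, 1, 1)),
    DataInput("industry_forecast", "analyst report", 0.6, date(2024, 1, 1), 0.05),
    DataInput("social_media", "public commentary", 0.4, date(2024, 4, 1), 0.10),
]
# Assumed importance of each input to the decision.
weights = {"sales_history": 0.5, "industry_forecast": 0.3, "social_media": 0.2}

today = date(2024, 7, 1)
confidence = decision_confidence(inputs, weights, today)
weakest = weakest_input(inputs, today)
print(f"confidence={confidence:.2f}, weakest input={weakest.name}")
```

With these made-up numbers the overall confidence comes out at about 0.56, and the social-media input is the weakest link. A low score on a heavily weighted input is the kind of signal that could prompt management to improve that data set before making a go/no-go call.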

Connect the dots

Insights often live at the boundaries. Just as considering soft data can reveal new insights, combining one’s sources of information can make those insights sharper still. Too often, organizations drill down on a single data set in isolation but fail to consider what different data sets convey in conjunction. For example, HR may have thorough employee-performance data; operations, comprehensive information about specific assets; and finance, pages of backup behind a P&L. Examining each cache of information carefully is certainly useful. But additional untapped value may be nestled in the gullies among separate data sets.

One industrial company provides an instructive example. The core business used a state-of-the-art machine that could undertake multiple processes. It also cost millions of dollars per unit, and the company had bought hundreds of them—an investment of billions. The machines provided best-in-class performance data, and the company could, and did, measure how each unit functioned over time. It would not be a stretch to say that keeping the machines up and running was critical to the company’s success.

Even so, the machines required longer and more costly repairs than management had expected, and every hour of downtime affected the bottom line. Although a very capable analytics team embedded in operations sifted through the asset data meticulously, it could not find a credible cause for the breakdowns. Then, when the performance results were considered in conjunction with information provided by HR, the reason for the subpar output became clear: machines were missing their scheduled maintenance checks because the personnel responsible were absent at critical times. Payment incentives, not equipment specifications, were the real root cause. A simple fix solved the problem, but it became apparent only when different data sets were examined together.
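The cross-check described above can be illustrated with a minimal Python sketch. All records, field names, and identifiers below are hypothetical. The point is only that missed maintenance looks unexplained in the operations data alone and becomes explainable once HR absence records are joined in.

```python
from collections import defaultdict
from datetime import date

# Hypothetical operations data: scheduled maintenance checks per machine.
maintenance_schedule = [
    {"machine": "M-01", "date": date(2024, 3, 4), "technician": "T-7", "completed": True},
    {"machine": "M-01", "date": date(2024, 3, 11), "technician": "T-7", "completed": False},
    {"machine": "M-02", "date": date(2024, 3, 5), "technician": "T-9", "completed": False},
    {"machine": "M-02", "date": date(2024, 3, 12), "technician": "T-9", "completed": True},
    {"machine": "M-03", "date": date(2024, 3, 6), "technician": "T-4", "completed": False},
]

# Hypothetical HR data: recorded absences per employee.
absences = [
    {"employee": "T-7", "date": date(2024, 3, 11)},
    {"employee": "T-9", "date": date(2024, 3, 5)},
    {"employee": "T-4", "date": date(2024, 3, 20)},
]


def missed_checks_by_cause(schedule, absence_records):
    """Join missed checks to absences on (technician, date) and count by cause."""
    absent_on = {(a["employee"], a["date"]) for a in absence_records}
    counts = defaultdict(int)
    for check in schedule:
        if check["completed"]:
            continue  # only missed checks need an explanation
        key = (check["technician"], check["date"])
        cause = "technician absent" if key in absent_on else "other"
        counts[cause] += 1
    return dict(counts)


result = missed_checks_by_cause(maintenance_schedule, absences)
print(result)
```

In this toy data, two of the three missed checks coincide with the responsible technician being absent, a pattern that neither data set shows on its own.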

From outputs to action

One visual that comes to mind in the case of the preceding industrial company is that of a Venn diagram: when you look at two data sets side by side, a key insight becomes clear through the overlap. And when you consider 50 data sets, the insights are even more powerful, provided the quest for diverse data doesn’t create overwhelming complexity that actually inhibits the use of analytics. To avoid this problem, leaders should push their organizations to take a multifaceted approach in analyzing data. If analyses are run in silos, if the outputs do not work under real-world conditions, or, perhaps worst of all, if the conclusions would work but sit unused, the analytics exercise has failed.

Run loops, not lines

Data analytics needs a purpose and a plan. But as the saying goes, “no battle plan ever survives contact with the enemy.” To that, we’d add another military insight—the OODA loop, first conceived by US colonel John Boyd: the decision cycle of observe, orient, decide, and act. Victory, Boyd posited, often resulted from the way decisions are made; the side that reacts to situations more quickly and processes new information more accurately should prevail. The decision process, in other words, is a loop or—more correctly—a dynamic series of loops (exhibit).

Exhibit

Best-in-class organizations adopt this approach to their competitive advantage. Google, for one, insistently makes data-focused decisions, builds consumer feedback into solutions, and rapidly iterates products that people not only use but love. A loops-not-lines approach works just as well outside of Silicon Valley. We know of a global pharmaceutical company, for instance, that tracks and monitors its data to identify key patterns, moves rapidly to intervene when data points suggest that a process may move off track, and refines its feedback loop to speed new medications through trials. And a consumer-electronics OEM moved quickly from collecting data to “doing the math” with an iterative, hypothesis-driven modeling cycle. It first created an interim data architecture, building three “insights factories” that could generate actionable recommendations for its highest-priority use cases, and then incorporated feedback in parallel. All of this enabled its early pilots to deliver quick, largely self-funding results.

Digitized data points are now speeding up feedback cycles. By using advanced algorithms and machine learning that improves with the analysis of every new input, organizations can run loops that are faster and better. But while machine learning very much has its place in any analytics tool kit, it is not the only tool to use, nor do we expect it to supplant all other analyses. We’ve mentioned circular Venn diagrams; people more partial to three-sided shapes might prefer the term “triangulate.” But the concept is essentially the same: to arrive at a more robust answer, use a variety of analytics techniques and combine them in different ways.

In our experience, even organizations that have built state-of-the-art machine-learning algorithms and use automated looping will benefit from comparing their results against a humble univariate or multivariate analysis. The best loops, in fact, involve people and machines. A dynamic, multipronged decision process will outperform any single algorithm—no matter how advanced—by testing, iterating, and monitoring the way the quality of data improves or degrades; incorporating new data points as they become available; and making it possible to respond intelligently as events unfold.
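One way to picture the comparison against a humble baseline is the Python sketch below. It assumes a one-variable least-squares line as the baseline and mean absolute error as the yardstick. The data, the stand-in "advanced model" predictions, and the function names are all invented for illustration.

```python
from statistics import mean


def fit_univariate(x, y):
    """Ordinary least squares for a single predictor: returns (slope, intercept)."""
    x_bar, y_bar = mean(x), mean(y)
    s_xy = sum((xi - x_bar) * (yi - y_bar) for xi, yi in zip(x, y))
    s_xx = sum((xi - x_bar) ** 2 for xi in x)
    slope = s_xy / s_xx
    return slope, y_bar - slope * x_bar


def mean_abs_error(actual, predicted):
    return mean(abs(a - p) for a, p in zip(actual, predicted))


def needs_review(model_error, baseline_error):
    """Flag the advanced model for human review if it fails to beat the baseline."""
    return model_error >= baseline_error


# Hypothetical history used to fit the baseline.
train_x = [1, 2, 3, 4]
train_y = [2.0, 4.0, 6.0, 8.0]

# Hypothetical holdout period with known outcomes.
holdout_x = [5, 6]
holdout_y = [10.5, 11.5]

slope, intercept = fit_univariate(train_x, train_y)
baseline_predictions = [slope * x + intercept for x in holdout_x]

# Stand-in for the output of a more sophisticated model (made-up numbers).
model_predictions = [9.6, 12.9]

baseline_mae = mean_abs_error(holdout_y, baseline_predictions)
model_mae = mean_abs_error(holdout_y, model_predictions)
flag = needs_review(model_mae, baseline_mae)
print(f"baseline MAE={baseline_mae:.2f}, model MAE={model_mae:.2f}, review={flag}")
```

Here the simple line beats the stand-in model on the holdout data, so the loop hands the result to a person for review rather than trusting the more elaborate algorithm by default.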

Make your output usable—and beautiful

While the best algorithms can work wonders, they can’t speak for themselves in boardrooms. And data scientists too often fall short in articulating what they’ve done. That’s hardly surprising; companies hiring for technical roles rightly prioritize quantitative expertise over presentation skills. But mind the gap, or face the consequences. One world-class manufacturer we know employed a team that developed a brilliant algorithm for the options pricing of R&D projects. The data points were meticulously parsed, the analyses were intelligent and robust, and the answers were essentially correct. But the organization’s decision makers found the end product somewhat complicated and didn’t use it.

We’re all human after all, and appearances matter. That’s why a beautiful interface will get you a longer look than a detailed computation with an uneven personality. That’s also why the elegant, intuitive usability of products like the iPhone or the Nest thermostat is making its way into the enterprise. Analytics should be consumable, and best-in-class organizations now include designers on their core analytics teams. We’ve found that workers throughout an organization will respond better to interfaces that make key findings clear and that draw users in.

Build a multiskilled team

Drawing your users in—and tapping the capabilities of different individuals across your organization to do so—is essential. Analytics is a team sport. Decisions about which analyses to employ, what data sources to mine, and how to present the findings are matters of human judgment.

Assembling a great team is a bit like creating a gourmet delight—you need a mix of fine ingredients and a dash of passion. Key team members include data scientists, who help develop and apply complex analytical methods; engineers with skills in areas such as microservices, data integration, and distributed computing; cloud and data architects to provide technical and systemwide insights; and user-interface developers and creative designers to ensure that products are visually beautiful and intuitively useful. You also need “translators”—men and women who connect the disciplines of IT and data analytics with business decisions and management.

In our experience—and, we expect, in yours as well—the demand for people with the necessary capabilities decidedly outstrips the supply. We’ve also seen that simply throwing money at the problem by paying a premium for a cadre of new employees typically doesn’t work. What does is a combination: a few strategic hires, generally more senior people to help lead an analytics group; in some cases, strategic acquisitions or partnerships with small data-analytics service firms; and, especially, recruiting and reskilling current employees with quantitative backgrounds to join in-house analytics teams.

We’re familiar with several financial institutions and a large industrial company that pursued some version of these paths to build best-in-class advanced data-analytics groups. A key element of each organization’s success was understanding both the limits of what any one individual can be expected to contribute and the potential that an engaged team with complementary talents can collectively achieve. On occasion, one can find “rainbow unicorn” employees who embody most or all of the needed capabilities. It’s a better bet, though, to build a collaborative team comprising people who collectively have all the necessary skills.

That starts, of course, with people at the “point of the spear”—those who actively parse through the data points and conduct the hard analytics. Over time, however, we expect that organizations will move to a model in which people across functions use analytics as part of their daily activities. Already, the characteristics of promising data-minded employees are not hard to see: they are curious thinkers who can focus on detail, get energized by ambiguity, display openness to diverse opinions and a willingness to iterate together to produce insights that make sense, and are committed to real-world outcomes. That last point is critical because your company is not supposed to be running some cool science experiment (however cool the analytics may be) in isolation. You and your employees are striving to discover practicable insights—and to ensure that the insights are used.

Make adoption your deliverable

Culture makes adoption possible. And from the moment your organization embarks on its analytics journey, it should be clear to everyone that math, data, and even design are not enough: the real power comes from adoption. An algorithm should not be a point solution—companies must embed analytics in the operating models of real-world processes and day-to-day work flows. Bill Klem, the legendary baseball umpire, famously said, “It ain’t nothin’ until I call it.” Data analytics ain’t nothin’ until you use it.

We’ve seen too many unfortunate instances that serve as cautionary tales—from detailed (and expensive) seismology forecasts that team foremen didn’t use to brilliant (and amazingly accurate) flight-system indicators that airplane pilots ignored. In one particularly striking case, a company we know had seemingly pulled everything together: it had a clearly defined mission to increase top-line growth, robust data sources intelligently weighted and mined, stellar analytics, and insightful conclusions on cross-selling opportunities. There was even an elegant interface in the form of pop-ups that would appear on the screen of call-center representatives, automatically triggered by voice-recognition software, to prompt certain products, based on what the customer was saying in real time. Utterly brilliant—except the representatives kept closing the pop-up windows and ignoring the prompts. Their pay depended more on getting through calls quickly and less on the number and type of products they sold.

When everyone pulls together, though, and incentives are aligned, the results can be remarkable. For example, one aerospace firm needed to evaluate a range of R&D options for its next-generation products but faced major technological, market, and regulatory challenges that made any outcome uncertain. Some technology choices seemed to offer safer bets in light of historical results, and other, high-potential opportunities appeared to be emerging but were as yet unproved. Coupled with an industry trajectory that appeared to be shifting from a product- to service-centric model, the range of potential paths and complex “pros” and “cons” required a series of dynamic—and, of course, accurate—decisions.

By framing the right questions, stress-testing the options, and, not least, communicating the trade-offs with an elegant, interactive visual model that design skills made beautiful and usable, the organization discovered that increasing investment along one R&D path would actually keep three technology options open for a longer period. This bought the company enough time to see which way the technology would evolve and avoided the worst-case outcome of being locked into a very expensive, and very wrong, choice. One executive likened the resulting flexibility to “the choice of betting on a horse at the beginning of the race or, for a premium, being able to bet on a horse halfway through the race.”

It’s not a coincidence that this happy ending concluded as the initiative had begun: with senior management’s engagement. In our experience, the best day-one indicator for a successful data-analytics program is not the quality of data at hand, or even the skill-level of personnel in house, but the commitment of company leadership. It takes a C-suite perspective to help identify key business questions, foster collaboration across functions, align incentives, and insist that insights be used. Advanced data analytics is wonderful, but your organization should not be working merely to put an advanced-analytics initiative in place. The very point, after all, is to put analytics to work for you.

ABOUT THE AUTHOR(S)

Helen Mayhew is an associate partner in McKinsey’s London office, where Tamim Saleh is a senior partner; Simon Williams is cofounder and director of QuantumBlack, a McKinsey affiliate based in London.

The authors wish to thank Nicolaus Henke for his contributions to this article.
